To Boldly Test Where No One Has Gone Before

February 15, 2019

Lately, I’ve been reading a fresh crop of “TDD is terrible” posts. Here’s my counterargument: Test-Driven Development is “liberating.” Here’s how I use TDD as a design tool.

Begin personal rant without any factual data to back it up, also known as “in my experience.”

I remember my early days at Pivotal Labs, pairing on a heavy JavaScript project. While my Ruby-fu was pretty good, my JS was rusty, to say the least. We sat down at the editor and I vapor-locked. Not a good way to start the morning. I will always remember that discomfort. It felt wrong. I was doing TDD as it is commonly perceived: “Write a test. Watch it go red. Make it green. Refactor. Repeat.” That’s not very creative. Instead, it was mechanical, cold, sterile. It’s very frustrating when you’re stuck. So, I hear y’all with all the feels.

Here’s what I learned later from Martin Fowler, Sandi Metz, Sarah Mei, Kent Beck and others. TDD is about making discoveries and design choices. Now my sessions start with, “What do we know? What’s the most important thing to learn next? What direction do we want to go? What’s the smallest productive step?” TDD is about exploration. That’s much more interesting. We’re going on an adventure.

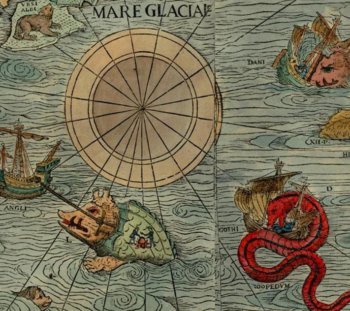

Carta marina, a wall map of Scandinavia. Olaus Magnus, 1539. The caption reads: Marine map and Description of the Northern Lands and of their Marvels, most carefully drawn up at Venice in the year 1539 through the generous assistance of the Most Honourable Lord Hieronymo Quirino.

Of course, like any adventure, the first steps can be scary. You don’t know if you’re prepared or not. What happens if something goes horribly wrong? TDD is a methodology to help you move from one place of certainty to the next, safely, without crashing. I use TDD to test my assumptions and my intentions: “I want this module to do X. What do I need? Data? State? Process?”

Another analogy that I like to use: You know all those old navigation charts that had colorful drawings at the margins: Here be dragons? TDD allows you to move from the known world to the unknown with safety. It expands the frontiers of our knowledge. It also gives you a path of breadcrumbs back if you find yourself at a dead end (or falling off the edge of the world into infinite waterfalls).

Lots of things that people might say aren’t TDD-ish, are, in my book, totally TDD. Using a REPL (read-eval-print-loop) to poke at existing code or APIs is TDD. Spiking some code to get a feel for the shape of things is TDD. Really. It’s all tests. It drives your design. Eventually, however, you’re out there and a crash-and-burn risks blowing up the development effort. That is when you “down shift” and “add structure;” that is, start writing the familiar red-green-refactor test cycles. When easing people into TDD, I start with “Tell me, in your own words, what you want this code to do.” The usual response is an overly complicated, multi-step solution to the whole problem. I then say, “Whoa cowboy! What’s the simplest statement that you can make?” The answer, usually, is “calling method X returns Y” to which I say, “Yay! Let’s write just that test.”
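To make “calling method X returns Y” concrete, here is a minimal sketch of what “just that test” might look like. The function `full_name` and its behavior are made up purely for illustration; they are not from any real project. The implementation shown is simply the least code that turns the test green.

```python
# Hypothetical example: "calling full_name('Ada', 'Lovelace') returns 'Ada Lovelace'".
# Both the function name and its behavior are assumptions for illustration.

def full_name(first, last):
    # The simplest implementation that makes the one test below pass.
    return f"{first} {last}"


def test_full_name_joins_first_and_last_with_a_space():
    # One statement about the code, one assertion. Everything else goes on a note card.
    assert full_name("Ada", "Lovelace") == "Ada Lovelace"
```

If this lives in a file named something like `test_names.py`, a runner such as pytest will pick up the `test_` function. Write the test first, watch it fail because `full_name` does not exist yet, then add the function.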

But, but, but what about all that other stuff? Take notes about the other stuff. Scribble it down on note cards. Add it as tasks to the story in Pivotal Tracker. Post-its, notebook, napkins, whatevs. Just dump all those distractions out of your 7±2 short-term memory stack. Focus on the test in front of you. The test fails. Yay! Who would have thought that failure could be so liberating? We have a problem to solve. Red-Green-Refactor. Boom! Our knowledge of the problem domain has inched ever so slightly toward our destination (we hope, mostly, probably, I trust my experience). One test usually leads to the next, or a few more. It depends on where we are in the process. Early on, there are a lot of questions, but as we progress and the solution set narrows, so do the design questions.

Or, we’ve gone down a garden path or two. Our tests have taken us to a place where we can’t solve the problem. That’s okay, too. Our tools allow us to roll back to a known safe point where we can try a different solution path. That’s not failure, that’s Research & Development FTW! Yes, I do sound like a giggling loon when we have to literally delete code that we just spent hours writing. Which would you rather have, though? If it were easy to solve every software problem, there’d probably be an AI doing it already. Most software problems have to deal with humans: Mushy, imperfect, inconsistent, unpredictable, sometimes irrational, lovely humans. In comparison, quantum physics seems easy. (Just kidding.) Software that models human behavior is going to be tricky. So TDD helps me explore the boundaries of my knowledge, pushing the envelope outward, until, reliably, the edge hits the destination.

We’re not done yet, but take a look back at your accomplishments. The code works. You can show it to your stakeholders. You can test it in front of users. You can get feedback at the business value level, “Is this even worth it?” Meanwhile, you write tests to battle-harden the code: Edge conditions, input validation, synchronization, persistence. It’s that reliability, the knowledge that we’ll always find a solution (or at least discover how badly we underestimated the scope of the problem!), that keeps TDD at the top of my tool-bag. At the end of the day, I can always answer the question,

Are we done?